```r
# 1. Generate CIs with autoscale for a visually comparable set of images.
#    Useful for rating-task stimuli or figure panels: the strongest-signal
#    CI uses the full display range and weaker-signal CIs scale proportionally.
cis <- rcicr::batchGenerateCI2IFC(
  data = responses,
  by = "participant_id",
  stimuli = "stimulus",
  responses = "response",
  baseimage = "base",
  rdata = "stimuli.RData",
  save_as_png = TRUE,
  scaling = "autoscale"
)

# 2. Compute infoVal per participant. Operates on ci$ci internally.
iv <- sapply(cis, function(ci) {
  rcicr::computeInfoVal2IFC(ci, rdata = "stimuli.RData")
})

# 3. Pixel-wise similarity between two CIs. Compute on the raw CI.
r <- cor(c(cis[[1]]$ci), c(cis[[2]]$ci))
```

Choosing a CI scaling option in rcicr

If you’ve ever generated a classification image (CI) with rcicr (Dotsch, 2023), you’ve had to pick a scaling argument. There are five options, and the documentation mostly tells you what each one does, not which one to use for what. After running into this question more times than I’d like to admit, I wrote down the answer for myself and thought I might as well share it with the research community.

This post is a standalone version of the CI scaling options chapter in the rcisignal user’s guide. Worth reading even if you don’t use that package.

What scaling is and why we need it

When rcicr::batchGenerateCI2IFC() (or generateCI2IFC()) computes a CI, the underlying math produces a 2D pixel array whose values are typically centred near zero and span a small range, often somewhere between -0.01 and +0.01, depending on signal strength and number of trials. These numbers are the real signal: they encode how much each pixel contributes to the participant’s mental image.

The problem is that they cannot be displayed as-is. Monitors render pixel intensities between 0 (black) and 1 (white); negative values have no display meaning, and the absolute values would be too small to see anyway.

Scaling solves this display problem by remapping the CI into a range a monitor can render. Every CI that rcicr returns therefore has two parallel fields:

- ci$ci: the raw CI, exactly as produced by the weighted sum of noise patterns. This is your data.
- ci$scaled: the rescaled CI, after one of the transformations below. This is what gets saved as a PNG or combined with the base image.

The raw field is unchanged regardless of which scaling option you pass. Only the scaled field changes. Keep that in mind: it’s the load-bearing fact for everything below.
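To make the display problem concrete, here is a minimal base-R sketch. It does not call rcicr: the simulated noise patterns, the -1/+1 response coding, and the list name toy_ci are made-up stand-ins, and the remapping uses the constant formula with c = 0.1 purely as an illustration.

```r
# Toy simulation, not rcicr internals: a CI as the response-weighted mean of noise.
set.seed(1)
n_trials <- 300
side <- 64
noise <- matrix(rnorm(n_trials * side^2, sd = 0.05), nrow = n_trials)  # one row per trial
resp <- sample(c(-1, 1), n_trials, replace = TRUE)                      # hypothetical response coding

raw <- matrix(colMeans(noise * resp), side, side)

# Mimic the two parallel fields: the raw data and a display version of it.
toy_ci <- list(ci = raw, scaled = (raw + 0.1) / (2 * 0.1))

range(toy_ci$ci)      # tiny values on both sides of zero
mean(toy_ci$ci < 0)   # roughly half of the pixels are negative
range(toy_ci$scaled)  # inside [0, 1], so it can be drawn as a greyscale image
```

Changing the remapping changes only toy_ci$scaled; toy_ci$ci stays exactly as computed.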

The five options

| Option | What it does | When to consider it |

|---|---|---|

none |

Leaves the CI unchanged in $scaled. |

Numerical inspection; any computation you want in raw CI units. |

constant |

\((CI + c) / (2c)\) with \(c\) fixed (default \(c = 0.1\)). The same \(c\) gives identical mapping across CIs. | Visual comparability across CIs when you want to preserve relative intensity and have within-study control over the display scale. |

independent |

Same formula, but \(c = \max\|CI\|\) computed separately for each CI. Each CI is stretched to \([0, 1]\) using its own range. | Maximum visual contrast for a single CI viewed alone. Not for comparisons across CIs. |

matched |

Linearly remaps the CI to match the base image’s pixel range. | Overlaying the CI on the base image; rating-task stimuli that should blend with the base face. |

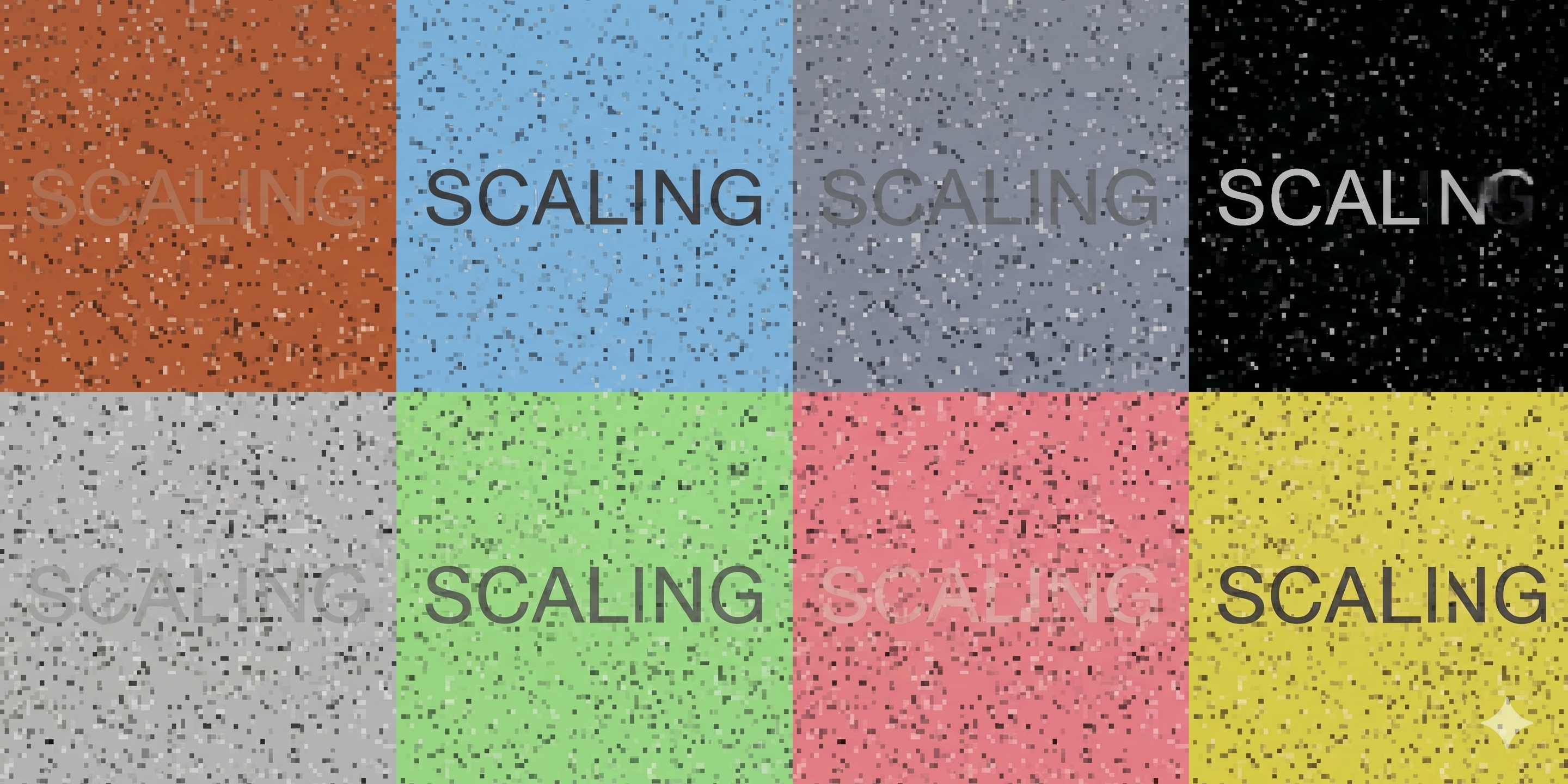

autoscale |

Looks across every CI in the batch, finds the widest-range one (the largest \(\max\|CI\|\)), and uses that single value as the shared scaling constant \(c\) for every CI. The strongest-signal CI then occupies the full display range and weaker-signal CIs occupy proportionally less of it, which preserves real intensity differences between CIs. | When you want a set of CIs to be visually comparable to each other within the same study: figure panels of group-level CIs across conditions, or a batch of CIs that will be used as stimuli in a downstream rating task. |

A small detail that catches people out: the default scaling is different across functions. batchGenerateCI2IFC() defaults to "autoscale"; generateCI2IFC() defaults to "independent". Two pipelines that both claim to use “rcicr defaults” can therefore produce visually different images from the same underlying raw CIs. Document which one you used.
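The five transformations are short enough to write out by hand. The sketch below is a base-R re-implementation of the formulas as described in the table, not rcicr's own code; the function names (scale_none and friends), the argument name const, and the toy matrices are all hypothetical.

```r
# Hand-written versions of the five formulas from the table (illustrative only).
scale_none <- function(ci) ci
scale_constant <- function(ci, const = 0.1) (ci + const) / (2 * const)
scale_independent <- function(ci) scale_constant(ci, const = max(abs(ci)))
scale_matched <- function(ci, base) {
  # Linear remap of the CI's own range onto the base image's range.
  min(base) + (max(base) - min(base)) * (ci - min(ci)) / (max(ci) - min(ci))
}
scale_autoscale <- function(cis) {
  # One shared constant for the whole batch: the largest max|CI|.
  shared <- max(vapply(cis, function(ci) max(abs(ci)), numeric(1)))
  lapply(cis, scale_constant, const = shared)
}

set.seed(42)
weak <- matrix(rnorm(16, sd = 0.002), 4, 4)    # toy weak-signal CI
strong <- matrix(rnorm(16, sd = 0.010), 4, 4)  # toy strong-signal CI
base <- matrix(runif(16, 0.2, 0.8), 4, 4)      # toy base image

range(scale_independent(weak))    # the largest-magnitude pixel lands on 0 or 1
range(scale_matched(weak, base))  # same as range(base)
auto <- scale_autoscale(list(weak = weak, strong = strong))
sapply(auto, range)               # one column of (min, max) per CI
```

Writing autoscale as "constant with a batch-derived value" makes the relationship between the two options explicit: they differ only in where the constant comes from.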

Which scaling for which analysis

The short version: numerical analyses operate on ci$ci; visualization choices operate on ci$scaled. The scaling argument only affects the second.

The longer version, by use case:

| Your goal | Correct choice | Reasoning |
|---|---|---|
| Computing infoVal | Any scaling | rcicr::computeInfoVal2IFC() reads ci$ci internally. The scaling argument has no effect on the returned z-score. |
| Pixel-wise correlations between CIs (similarity) | Use ci$ci | Each scaling option is a positive linear map of a single CI, so in exact arithmetic Pearson correlation is the same on the scaled and the raw fields. That stops being true once a scaled image has been clipped to \([0, 1]\) or rounded to 8-bit values, for example after a round trip through a PNG file. Reading ci$ci avoids the question and keeps every statistic on the same field. |
| Euclidean distance between CIs (e.g., the objective discriminability ratio in Brinkman et al., 2019) | Use ci$ci | Unlike correlation, distance is not scale-invariant. CI-dependent rescalings (independent, matched) change distances non-uniformly. |
| Producing CIs for a trait-rating task | autoscale for one within-study batch, or constant with a fixed \(c\) if you need consistency across studies | Raters should perceive genuine signal differences between CIs. independent defeats this because every CI is stretched to the same display range; a weak-signal CI then looks as intense as a strong-signal CI. |
| Saving PNG figures for a paper | matched for base-image overlays; autoscale for grids of CIs | Pick one and document it. Mixing options across figures in the same paper makes them visually incomparable even when the underlying data are identical. |
| Pixel / cluster statistical tests (Chauvin et al., 2005) | rcicr::plotZmap() (handles the raw CI internally) | Do not hand-construct a z-map from ci$scaled. |
| Reporting CI magnitude or effect size | ci$ci | Scaled CIs have arbitrary units determined by the option chosen. |
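The Euclidean-distance row is easy to check on simulated data. The following base-R sketch uses three made-up CIs of different signal strength and hand-written scaling helpers (rescale and euclid are hypothetical names, not rcicr functions). It compares how the ratio of two distances behaves on the raw values, under one shared constant, and under per-CI constants.

```r
# Toy check: which rescalings preserve the relative distances between CIs?
set.seed(7)
n <- 32
ci_a <- matrix(rnorm(n^2, sd = 0.002), n, n)  # weak signal
ci_b <- matrix(rnorm(n^2, sd = 0.010), n, n)  # strong signal
ci_c <- matrix(rnorm(n^2, sd = 0.005), n, n)  # intermediate signal

rescale <- function(ci, const) (ci + const) / (2 * const)
euclid <- function(x, y) sqrt(sum((x - y)^2))

# Ratio d(a, b) / d(a, c) on the raw CIs.
ratio_raw <- euclid(ci_a, ci_b) / euclid(ci_a, ci_c)

# Shared constant: every distance shrinks by the same factor, so the ratio survives.
shared <- max(abs(ci_a), abs(ci_b), abs(ci_c))
ratio_shared <- euclid(rescale(ci_a, shared), rescale(ci_b, shared)) /
  euclid(rescale(ci_a, shared), rescale(ci_c, shared))

# Per-CI constants: each image gets its own factor, so the ratio changes.
own <- function(ci) rescale(ci, max(abs(ci)))
ratio_own <- euclid(own(ci_a), own(ci_b)) / euclid(own(ci_a), own(ci_c))

c(raw = ratio_raw, shared = ratio_shared, per_ci = ratio_own)
```

The shared-constant ratio matches the raw one because both distances are divided by the same 2c, whereas the per-CI version discards the strength differences that drove the raw distances.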

Common pitfalls

These are the four mistakes I keep running into in the wild (and have made myself).

- Correlating scaled CIs. Each scaling option is a positive linear map of one CI, so in exact arithmetic cor() on two independent-scaled CIs gives the same value as cor() on the raw CIs. The trouble starts when the scaled image has been clipped to \([0, 1]\) or rounded to 8-bit values, for example after being read back from a saved PNG: the result is then no longer a clean pixel-wise agreement. Compute correlations on the raw CI.
- Using independent for rating-task stimuli. A participant who responded weakly produces a low-signal CI. Under independent, that CI gets stretched to the same display range as a strong-signal CI. Raters perceive both as equally intense, and rating-based analyses systematically underestimate signal differences across participants.
- Mixing scaling options across studies. Two papers that both claim to use “rcicr defaults” are not necessarily comparable: batchGenerateCI2IFC() defaults to autoscale, generateCI2IFC() defaults to independent. Always document the scaling option used in your methods section.
- Believing that scaling affects infoVal. It does not. computeInfoVal2IFC() always reads the raw CI. If two analyses give different infoVal values, the cause is elsewhere (in the response data, in the reference-distribution cache, or in the trial count), not in scaling.
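The rating-stimuli pitfall can be illustrated with a small base-R sketch. The two CIs are simulated, the helper names (rescale, contrast) are hypothetical, and "contrast" is simply taken to be the standard deviation of the display values, which is an assumption made for illustration rather than a perceptual model.

```r
# Toy comparison: how much of the strong/weak difference survives each scaling?
set.seed(3)
weak <- matrix(rnorm(64 * 64, sd = 0.002), 64, 64)
strong <- matrix(rnorm(64 * 64, sd = 0.010), 64, 64)

rescale <- function(ci, const) (ci + const) / (2 * const)
contrast <- function(img) sd(as.vector(img))  # crude stand-in for visible intensity

# independent: each CI is scaled by its own max|CI|.
ind_ratio <- contrast(rescale(strong, max(abs(strong)))) /
  contrast(rescale(weak, max(abs(weak))))

# autoscale: one shared constant from the widest-range CI in the batch.
shared <- max(abs(weak), abs(strong))
auto_ratio <- contrast(rescale(strong, shared)) / contrast(rescale(weak, shared))

raw_ratio <- contrast(strong) / contrast(weak)

c(raw = raw_ratio, independent = ind_ratio, autoscale = auto_ratio)
```

With a shared constant the strong-to-weak contrast ratio equals the raw one, while per-CI constants pull it towards 1, which is the flattening that raters would see.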

Recommended defaults

When in doubt:

- Figures in a paper: autoscale for grids of CIs; matched for base-image overlays. Document the choice in methods.
- Rating-task stimuli: autoscale within a single batch; otherwise constant with a fixed c documented in methods.
- Any numerical analysis (infoVal, correlations, distances, effect sizes, pixel tests): compute from ci$ci, regardless of the scaling argument passed.

A typical R workflow

The workflow is the three-step listing at the top of this post. The PNGs from step 1 are visually comparable across participants because autoscale applies one shared constant across the batch (derived from the widest-range CI), so genuine differences in signal strength remain visible. The statistical results in steps 2 and 3 are computed on the raw CI and are independent of the scaling argument.

TL;DR

Pick a scaling option for your figures and stimuli, document it, and never use the scaled CI for statistics. The raw CI is the data; the scaled CI is the picture.

Thanks for reading!

References

Brinkman, L., Goffin, S., van de Schoot, R., van Haren, N. E. M., Dotsch, R., & Aarts, H. (2019). Quantifying the informational value of classification images. Behavior Research Methods, 51(5), 2059–2073. https://doi.org/10.3758/s13428-019-01232-2

Chauvin, A., Worsley, K. J., Schyns, P. G., Arguin, M., & Gosselin, F. (2005). Accurate statistical tests for smooth classification images. Journal of Vision, 5(9), 659–667. https://doi.org/10.1167/5.9.1

Dotsch, R. (2023). rcicr: Reverse correlation image classification toolbox (Version 1.0.1) [R package]. https://github.com/rdotsch/rcicr